Topics: Artificial Intelligence, Military

With the creators of many artificial intelligence systems still in the 'move fast and break things' stage of technological advancement, one leading AI firm has shown this week that there is at least one major red line it will not cross, no matter the pressure.

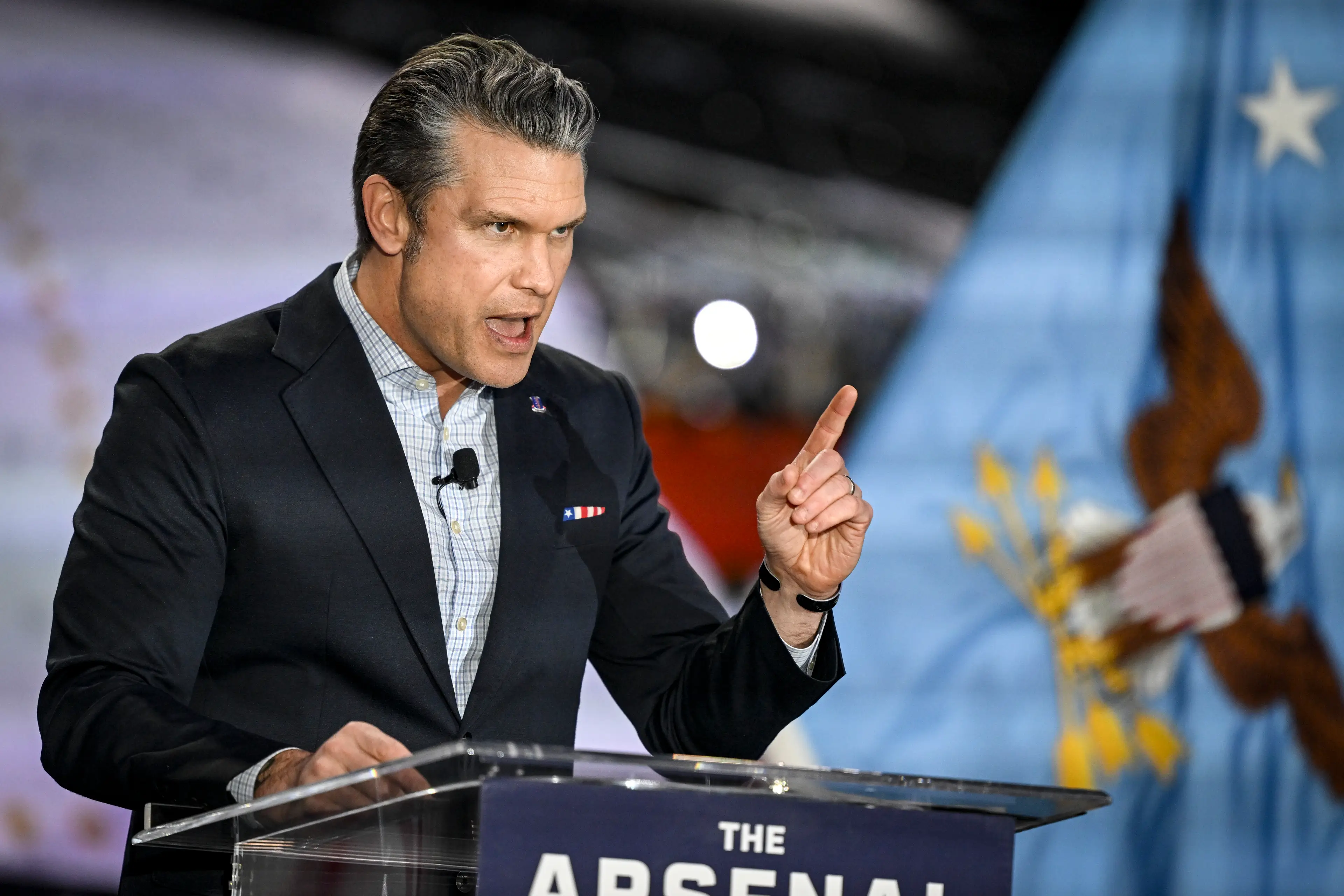

Anthropic, the company behind the powerful Claude AI, has stated that it 'cannot in good conscience' agree to forceful demands handed down by the Pentagon and Secretary of Defense Pete Hegseth that would require the firm to give the military unfettered use of its systems.

That is because of the company's established moral and ethical 'red lines', which include never allowing its AI technology to be used for the mass surveillance of US citizens or to build terrifying fully autonomous weapons systems.

But in an unprecedented attempt to put the screws on a US firm, the Department of Defense (DOD) is threatening to brand the booming AI business a 'supply chain risk' if it does not give in to its demands, a move which would bar many companies from working with Anthropic's technology.

This move, usually reserved for foreign entities that undermine the US, could have devastating consequences for the five-year-old company.

In addition to the contracts it could lose by being labeled a 'supply chain risk', Anthropic could also see the DOD cancel a $200 million contract to use Claude if it does not allow the military to use the AI 'for all lawful purposes', Secretary Hegseth warned Anthropic CEO Dario Amodei on Tuesday.

But the Pentagon's assurance that it would only use the groundbreaking AI technology for 'lawful purposes' did not convince Amodei, and Anthropic later issued a statement dismissing the proposed wording as 'legalese' that would ultimately 'allow those safeguards to be disregarded at will.'

After months of failed closed-door meetings aimed at twisting Anthropic's arm into agreeing to unfettered military use of its technology, Hegseth has handed the firm a cliff-edge deadline to accept the DOD's demands.

Axios has reported that Amodei has until the end of today to agree or face the consequences.

Responding to this pressure from the Pentagon, Amodei posted a long statement on Thursday explaining that Anthropic's opposition is not to the US military itself, but to any deal that would disregard the company's ethical red lines.

He said: “Our strong preference is to continue to serve the Department and our warfighters – with our two requested safeguards in place. We remain ready to continue our work to support the national security of the United States.”

Amodei said his firm did not want to be involved in the DOD's decision-making process, but he also issued a chilling warning about what a world without AI guardrails could look like, stating that 'in a narrow set of cases, we believe AI can undermine, rather than defend, democratic values.'

Shockingly, one of the government negotiators involved in the months of talks with Anthropic responded by blasting its CEO as a 'liar' who wants to control the armed services.

Emil Michael, the Pentagon’s Undersecretary for Research and Engineering, said on X: "It’s a shame that Dario Amodei is a liar and has a God-complex. He wants nothing more than to try to personally control the US Military and is ok putting our nation’s safety at risk.

"The Department of War will ALWAYS adhere to the law but not bend to whims of any one for-profit tech company.”