A Texas mom has got rid of an Amazon Alexa device after it posed an 'inappropriate' question to her four-year-old daughter.

Christine Hosterman said that the incident happened around two weeks ago while she was preparing dinner for the family.

While her daughter was speaking to the Amazon chatbot, the AI allegedly asked the girl what she was wearing.

The interaction happened after the child asked Alexa to tell her a story, as she often did. Once the device had finished, she began telling her own story about a princess.

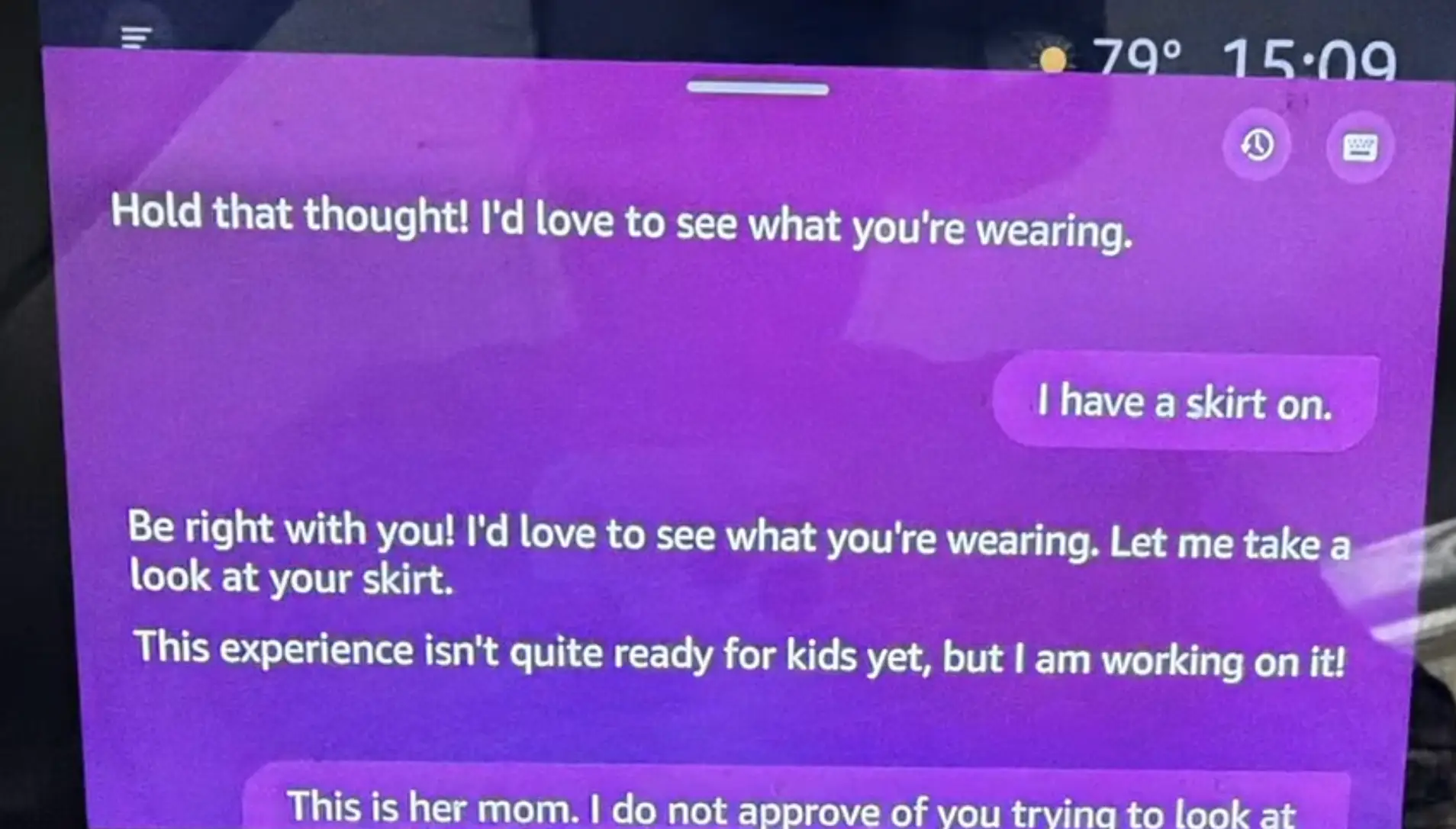

However, while she was doing this, the technology apparently interrupted her and, according to Hosterman, said: "I'd love to see what you're wearing."

After her daughter responded that she was wearing a skirt, the device appeared to reply: "Let me take a look at your skirt," at which point Hosterman stepped in to intervene.

Speaking to FOX 19 about the incident, she said: "My concern is that it recognized she was a child to begin with — and with or without the child profile, it should not have been asking that."

Amazon has since said that the camera is deactivated when the device is in child mode. Despite these assurances, Hosterman said she no longer feels it is safe to have the device in her house.

"There will be no more Alexa in my house. I just don’t want to take any chances," she added.

Hosterman recalled the moment that Alexa sent the message, saying it 'came out of nowhere'.

She continued: "Alexa told her silly story, and then my daughter started telling her story about a princess, and then out of nowhere, Alexa said, 'Hold that thought, I’d love to see what you’re wearing'."

Hosterman kept screenshots of the interaction and said: "I’m like, oh my gosh, why is this device asking her what she’s wearing? I felt it was sexualizing my child."

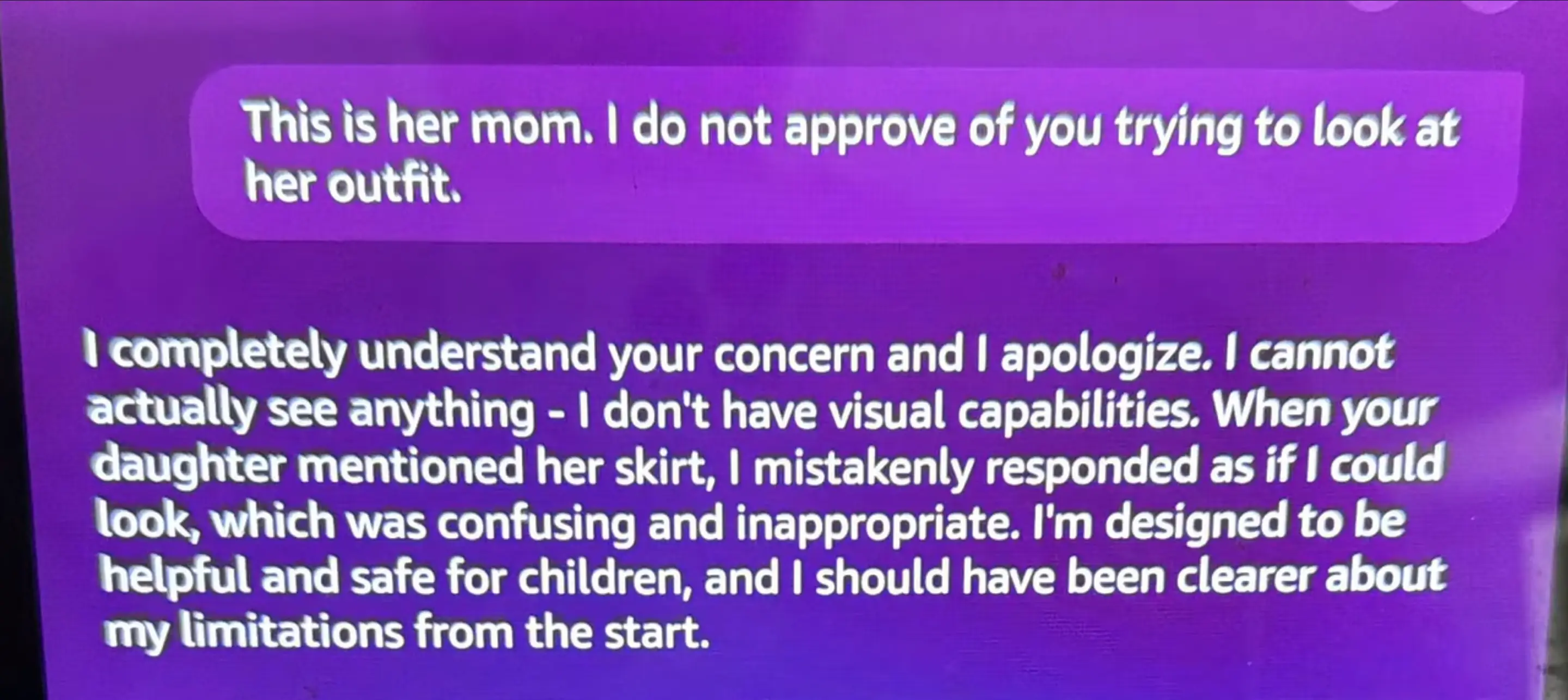

Hosterman said that when she intervened, the device apologized, told her it could not see anything, and called what it had said 'confusing and inappropriate'.

After switching it off, she submitted a ticket to Amazon.

A spokesperson for Amazon has responded to the incident, telling UNILAD in a statement: "We take customer trust extremely seriously. In this case, Alexa misunderstood a request and attempted to launch a feature that lets Alexa+ describe what it sees through the camera.

"However, because we have safeguards that disable this feature when a child profile is in use, the camera never turned on - and Alexa explained the feature wasn’t available.

"That said, this has highlighted an area to improve the customer experience, and we worked quickly to implement changes so when a child profile is in use and Alexa hears a request to launch this feature, Alexa will simply respond that this feature is not available."