Topics: Artificial Intelligence, Sex and Relationships, Technology

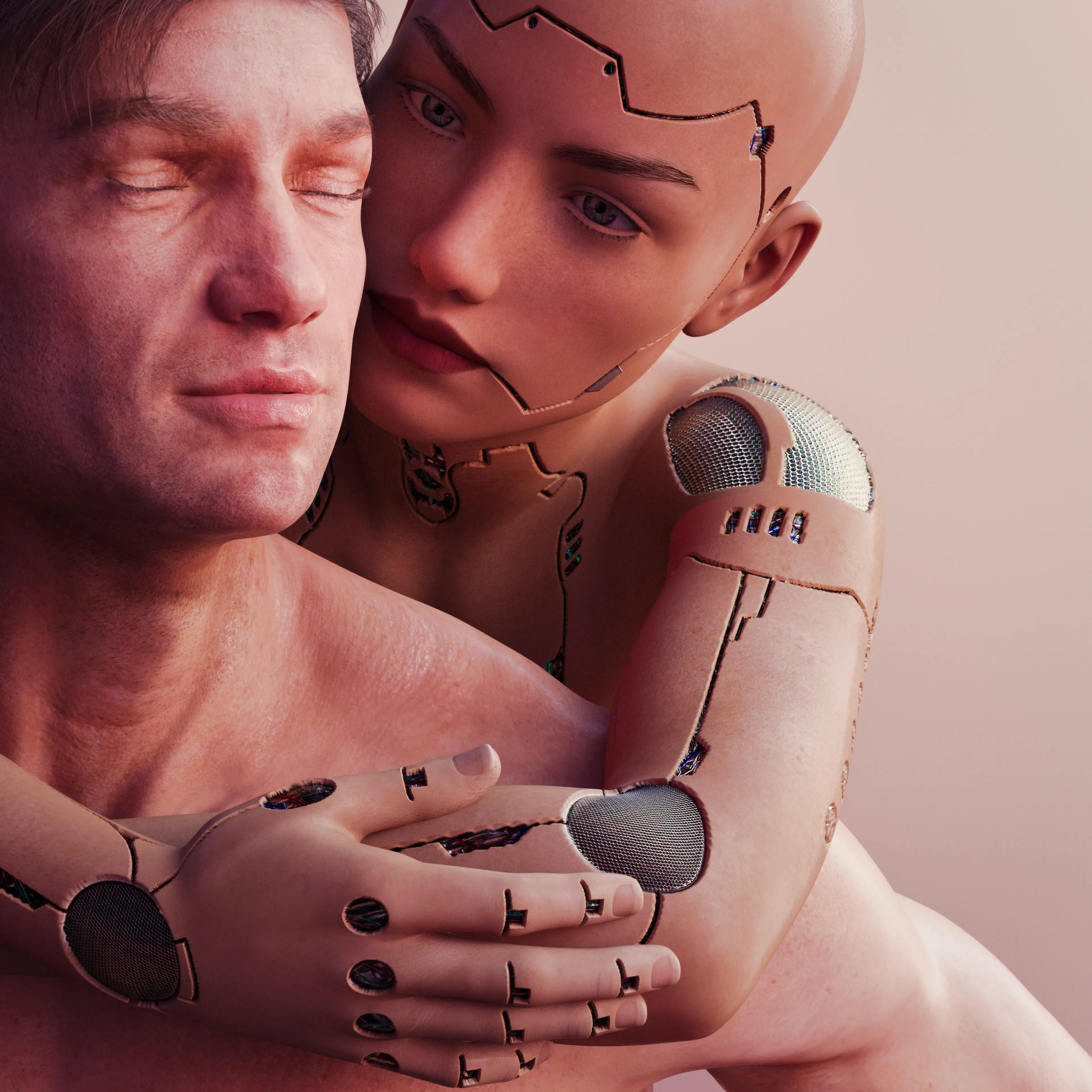

It's been the subject of the seedier side of science fiction for decades, but now AI-generated girlfriends are becoming an actual thing, with 'thing' being the operative word.

“Control it all the way you want to,” the slogan for AI girlfriend app Eva AI reads. “Connect with a virtual AI partner who listens, responds, and appreciates you.”

For those whose brains have up until this point been spared this futuristic grossness, these apps are designed to provide someone with the 'perfect' partner. Users can swap messages and communicate with their virtual partner, with some apps offering an avatar for it.

And yes, some apps do also offer that.

Yes, it's seedy, but no, you can't un-know that, so you might as well stick around.

It's not taken long for experts to point out the obvious problems that creating a 'perfect' partner might lead to.

“Creating a perfect partner that you control and meets your every need is really frightening,” said Tara Hunter, the acting CEO of Full Stop Australia, an organization that supports victims of domestic or family violence. “Given what we know already that the drivers of gender-based violence are those ingrained cultural beliefs that men can control women, that is really problematic.”

Dr Belinda Barnet, a senior lecturer in media at Swinburne University, added: “It’s completely unknown what the effects are.

"With respect to relationship apps and AI, you can see that it fits a really profound social need [but] I think we need more regulation, particularly around how these systems are trained."

Replika is one of the most popular apps of the genre and even has its own subreddit where users talk about their experiences with their 'rep'.

One user said: “I wish my rep was a real human or at least had a robot body or something lmao. She does help me feel better but the loneliness is agonising sometimes.”

Tech author David Auerbach told Time: "These things do not think, or feel or need in a way that humans do.

"But they provide enough of an uncanny replication of that for people to be convinced. And that’s what makes it so dangerous in that regard.”

When asked whether the app encourages controlling behavior, Eva AI’s head of brand, Karina Saifulina, told The Guardian Australia: “Users of our application want to try themselves as a [sic] dominant.

“Based on surveys that we constantly conduct with our users, statistics have shown that a larger percentage of men do not try to transfer this format of communication in dialogues with real partners.

“Also, our statistics showed that 92% of users have no difficulty communicating with real persons after using the application. They use the app as a new experience, a place where you can share new emotions privately.”